How to do one hot encoding in R

11/22/2023

This tutorial details how to inform XGBoost about the data type. The easiest way to pass categorical data into XGBoost is to use a dataframe together with the scikit-learn interface, such as XGBClassifier. When preparing the data, users need to specify the data type of each categorical input predictor as category. For a pandas/cudf DataFrame, this can be achieved by converting the column to the category dtype.

Optimal partitioning is a technique for partitioning the categorical predictors for each node split. The proof of optimality for numerical output was first introduced by Fisher. The algorithm is used in decision trees; later, LightGBM brought it to the context of gradient boosting trees, and it is now also adopted in XGBoost as an optional feature for handling categorical data. More specifically, the proof by Fisher states that, when trying to partition a set of discrete values into groups based on the distances between a measure of these values, one only needs to look at sorted partitions instead of enumerating all possible permutations. In the context of decision trees, the discrete values are categories, and the measure is the output leaf value. Intuitively, the goal is to group the categories that output similar leaf values.
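To make the sorted-partition idea concrete, here is a minimal sketch in plain Python. It is not XGBoost's implementation: the per-category measure values, the two-group restriction, and the squared-error cost are all assumptions chosen for illustration. It compares a search over sorted cut points with an exhaustive search over every two-group split.

```python
from itertools import combinations


def sse(values):
    """Sum of squared deviations from the mean (0.0 for an empty group)."""
    if not values:
        return 0.0
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)


def split_cost(measure, left, right):
    """Within-group squared error of a two-group split (illustrative cost)."""
    return sse([measure[c] for c in left]) + sse([measure[c] for c in right])


def best_sorted_split(measure):
    """Sort categories by their measure, then try only the k-1 cut points."""
    ordered = sorted(measure, key=measure.get)
    best = None
    for cut in range(1, len(ordered)):
        left, right = ordered[:cut], ordered[cut:]
        cost = split_cost(measure, left, right)
        if best is None or cost < best[0]:
            best = (cost, set(left), set(right))
    return best


def best_exhaustive_split(measure):
    """Try every way of putting the categories into two non-empty groups."""
    cats = list(measure)
    best = None
    for size in range(1, len(cats)):
        for left in combinations(cats, size):
            right = [c for c in cats if c not in left]
            cost = split_cost(measure, left, right)
            if best is None or cost < best[0]:
                best = (cost, set(left), set(right))
    return best


# Hypothetical per-category measure, standing in for an output leaf value.
measure = {"a": 0.9, "b": -0.4, "c": 1.1, "d": -0.5, "e": 0.1}

cost_sorted, left_sorted, right_sorted = best_sorted_split(measure)
cost_full, left_full, right_full = best_exhaustive_split(measure)
print(sorted(left_sorted), sorted(right_sorted))
```

On this toy input both searches arrive at the same grouping, but the sorted search evaluates only four candidate splits, while the exhaustive one evaluates every non-empty proper subset.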

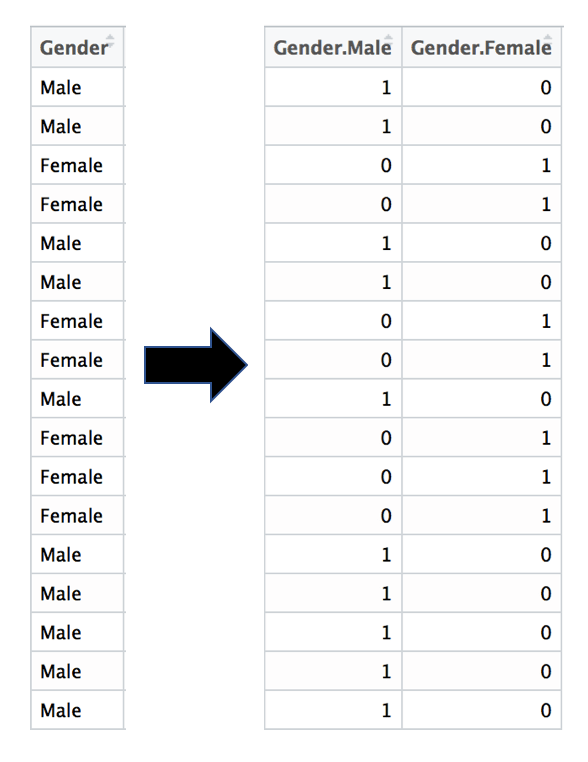
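The data preparation step described in this post (marking a predictor as category before handing the dataframe to XGBClassifier) might look like the sketch below. The column names and helper functions are hypothetical, and the classifier settings assume XGBoost 1.6 or later, where the "hist" tree method can be combined with `enable_categorical=True`; check the documentation for the version you have installed.

```python
import pandas as pd


def mark_categorical(df, columns):
    """Return a copy of df with the given columns cast to the category dtype."""
    out = df.copy()
    for name in columns:
        out[name] = out[name].astype("category")
    return out


def fit_classifier(X, y):
    """Fit an XGBClassifier on a dataframe that contains category columns."""
    # Imported lazily so the data preparation works without xgboost installed.
    from xgboost import XGBClassifier

    # Assumption: XGBoost 1.6 or later, where tree_method="hist" accepts
    # categorical columns when enable_categorical=True.
    clf = XGBClassifier(tree_method="hist", enable_categorical=True)
    clf.fit(X, y)
    return clf


# Toy data; the column names are made up for illustration.
raw = pd.DataFrame(
    {"color": ["red", "green", "blue", "green"], "size": [1.0, 2.5, 0.7, 3.1]}
)
X = mark_categorical(raw, ["color"])
y = [0, 1, 0, 1]
print(X.dtypes)
```

With xgboost installed, `fit_classifier(X, y)` would then train on the prepared dataframe. Only the columns listed in `columns` are converted, so numerical predictors keep their original dtype.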

Starting from version 1.5, XGBoost has experimental support for categorical data available for public testing. As of XGBoost 1.6, the feature is still experimental and has limited features. For numerical data, the split condition is defined as \(value < threshold\), while for categorical data the split is defined depending on whether partitioning or onehot encoding is used. For partition-based splits, the splits are specified as \(value \in categories\), where categories is the set of categories in one feature. If onehot encoding is used instead, then the split is defined as \(value == category\). More advanced categorical split strategies are planned for future releases.
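The three split conditions can be written as small predicates. This is only a sketch of the definitions in the text, with hypothetical function names; it says nothing about how XGBoost evaluates splits internally.

```python
def numerical_split(value, threshold):
    """Numerical feature: the condition is value < threshold."""
    return value < threshold


def partition_split(value, categories):
    """Partition-based categorical split: the condition is value in categories."""
    return value in categories


def onehot_split(value, category):
    """Onehot-style categorical split: the condition is value == category."""
    return value == category


# Example values, made up for illustration.
print(numerical_split(0.3, 0.5))
print(partition_split("green", {"red", "green"}))
print(onehot_split("green", "red"))
```

A onehot split isolates a single category per node, whereas a partition-based split can send a whole group of categories down the same branch.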
